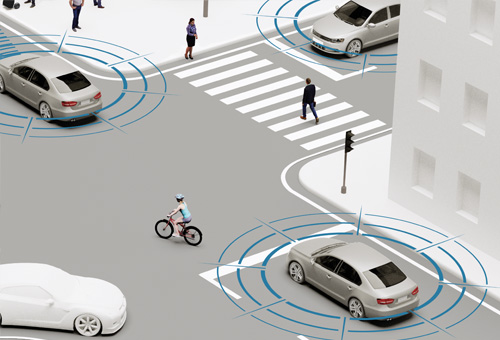

Vulnerable road users communicate in road traffic predominantly through poses and gestures. Some of this communication is deliberate: a gesture is used to consciously signal an intention. Cyclists, for example, use hand signals to indicate that they intend to change lanes or turn at an intersection. Vulnerable road users also make unconscious gestures that allow other road users to draw conclusions about what they intend to do. One example is the direction in which a pedestrian is looking, which can indicate that the pedestrian has seen an approaching vehicle. To anticipate the behaviour of vulnerable road users, automated vehicles must be able to identify these kinds of actions and gestures reliably and interpret them correctly.

These are the objectives pursued by the ‘Interaction with vulnerable road users’ subproject. The starting point for a more exhaustive behavioural analysis is the reliable and robust detection of vulnerable road users in road traffic. Stereo cameras, laser scanners and high-resolution radar sensors will be used for this purpose. Sensor fusion will combine the information arriving from the different sensors into a consistent picture of the surroundings. In particular, the detection of vulnerable road users should also be implemented with deep learning methods, such as deep neural networks, and should identify vulnerable road users reliably even under difficult conditions, such as partial occlusion.

A focal point of the subproject is the detailed analysis of vulnerable road users. Using a field study as a basis, characteristics of interaction and a large number of elementary gestures must first be identified and evaluated for their relevance. Using a range of sensor modalities, the characteristics that are relevant for identifying intentions and gestures are then extracted from the sensor data. These characteristics form the basis for identifying the poses (e.g. position of the head and body, line of sight or position of the legs) and gestures (such as a hand signal made by a cyclist) exhibited by vulnerable road users.
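To make the sensor fusion step described above more concrete, the following is a minimal sketch of one common way to combine position estimates of the same road user from several sensors: inverse-variance weighting. The text does not state which fusion method the subproject uses, so the method, the `Measurement` class, the `fuse_positions` function and all numeric values are illustrative assumptions, not the project's implementation.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    """One position estimate of a road user from a single sensor (hypothetical type)."""
    sensor: str       # e.g. "stereo_camera", "laser_scanner", "radar"
    x: float          # longitudinal position in metres
    y: float          # lateral position in metres
    variance: float   # assumed isotropic position variance in m^2


def fuse_positions(measurements):
    """Fuse several estimates of the same object by inverse-variance weighting.

    Sensors with a smaller variance (higher confidence) get a larger weight.
    Returns the fused (x, y) position and the variance of the fused estimate.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    if any(m.variance <= 0 for m in measurements):
        raise ValueError("variances must be positive")

    weights = [1.0 / m.variance for m in measurements]
    total = sum(weights)
    x = sum(w * m.x for w, m in zip(weights, measurements)) / total
    y = sum(w * m.y for w, m in zip(weights, measurements)) / total
    return x, y, 1.0 / total


if __name__ == "__main__":
    # Made-up example values for a single pedestrian seen by three sensors.
    pedestrian = [
        Measurement("stereo_camera", 10.0, 2.0, 0.25),
        Measurement("laser_scanner", 10.4, 2.2, 0.04),
        Measurement("radar", 10.1, 1.8, 0.50),
    ]
    print(fuse_positions(pedestrian))
```

The fused variance is never larger than that of the best single sensor, which is one reason to combine modalities. A real system would additionally have to associate measurements with objects and track them over time, which this sketch leaves out.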